The ICAD Principles

Introducing ICAD

From FAIR Data to Actionable Intelligence

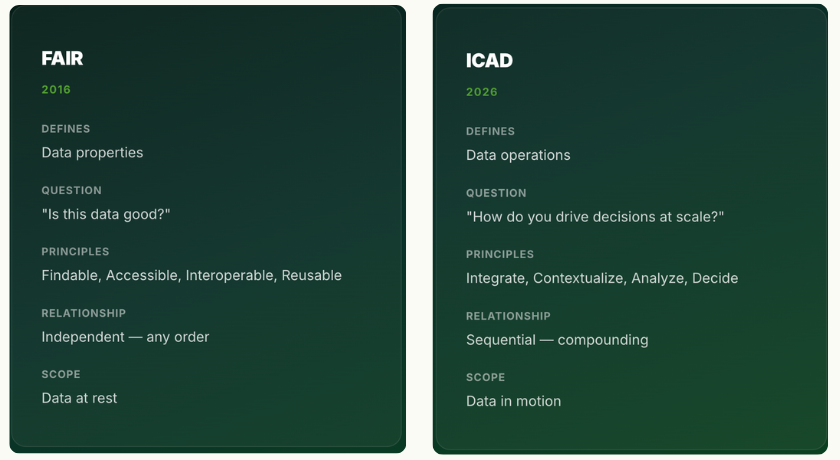

FAIR (2016)

The FAIR principles defined what good data looks like. They answered: can this data be found? Can it be accessed? Can it interoperate with other data? Can it be reused? These are properties of data at rest — criteria for data quality, not instructions for data action.

The Gap

FAIR does not address what happens next. How does raw instrument output become a governed, contextualized, analyzable dataset that AI can act on? FAIR assumes the infrastructure exists. In most pharma R&D organizations, it does not.

ICAD (2026) defines the operational sequence. Four steps, each building on the last. Not properties of data, but operations on data. Not a checklist — a compounding sequence.

Connect every instrument to a governed pipeline.

- I1. Every instrument output is captured at the point of generation — not exported manually, not aggregated after the fact.

- I2. Raw data is preserved in its native format with full provenance to source system, timestamp, and operator.

- I3. Integration is industrialized — each build creates a reusable asset that reduces the cost and time of the next integration.

- I4. New instruments are onboarded in days, not months — the integration pipeline is a factory, not a project.

What it enables

Instrument data from any vendor, any format, any site enters a governed pipeline — eliminating manual exports, CSV transfers, and site-specific data silos. Each new instrument connected enriches the dataset available to every downstream step in the sequence.

Add scientific meaning to raw data.

- C1. Data is linked to its scientific context — method, sample, experiment, study, program, and regulatory submission.

- C2. Master data is reconciled across sites, systems, and naming conventions — one identity per entity, globally.

- C3. Lineage traces every data point from instrument through transformation to decision, with no gaps.

- C4. Context is machine-readable — not trapped in PDF reports, Electronic Laboratory Notebook (ELN) narratives, or spreadsheet column headers.

What it enables

Scientists query across programs, sites, and therapeutic areas using scientific terms — not system identifiers. Regulatory teams trace any data point from submission back to the originating instrument run. Master data reconciliation eliminates the “same compound, five names” problem that plagues multi-site R&D.

Generate intelligence across programs and sites.

- A1. Analysis operates on contextualized data, never on raw instrument output alone — context is a prerequisite.

- A2. Cross-program comparisons are governed — same method, same context rules, same statistical framework.

- A3. Statistical models are traceable to their training data with full provenance — no black-box analytics.

- A4. Insights are reproducible — any analyst, any site, same governed dataset produces the same result.

What it enables

Out-of-specification (OOS) investigations that previously took weeks complete in hours because root-cause analysis operates on governed, cross-referenced data. Method transfers between sites carry full lineage. Stability trending spans programs and geographies. Analytical method comparisons are reproducible by any qualified analyst at any site.

Act with AI grounded in governed data.

- D1. AI operates only on data that has passed through I→C→A — never on ungoverned, decontextualized inputs.

- D2. Every AI recommendation is traceable to its source data, analytical provenance, and decision logic.

- D3. Autonomy is configurable per decision type — from fully autonomous execution for routine operations to human-in-the-loop approval where regulatory classification or risk profile requires it.

- D4. Decisions feed back into the sequence — improving integration targets, context models, and analytical frameworks.

What it enables

Investigational New Drug (IND) application compilation draws on governed data across disciplines — no manual assembly from disconnected systems. Chemistry, Manufacturing, and Controls (CMC) sections reference live, traceable analytical data. Laboratory workflows close the loop from experiment design through execution to result — with configurable autonomy that scales from full automation for routine operations to human-gated approval where Good Practice (GxP) classification requires it. Shelf-life predictions and trend analyses operate on complete, multi-program datasets rather than single-study snapshots.

Each Step Makes the Next One More Valuable

FAIR principles are independent — you can make data Findable without making it Accessible. ICAD principles are sequential and compounding.

Integration that has been contextualized is exponentially more valuable than raw integration. Analysis built on contextualized data is exponentially more reliable than analysis on raw feeds. Decisions from governed analysis are exponentially more trustworthy than decisions from ungoverned models.

This is why integration 50 makes the entire dataset more valuable than integration 1 did — because every new data source enriches the context, sharpens the analysis, and improves the decisions. And this is why AI without governed data is guesswork — you skipped three steps.

Complementary Principles